Deepfake scams in 2026 are becoming more convincing, more personal, and more dangerous than ever before. Artificial intelligence tools can now generate realistic voices, fake video calls, cloned audio messages, and manipulated visuals that are difficult to recognize at first glance.

A few years ago, many fake videos looked obvious. Today, scammers can imitate family members, company executives, celebrities, or customer support agents with alarming realism. In many real-world situations, the scam succeeds not because the technology is perfect, but because people react emotionally before verifying what they are seeing or hearing.

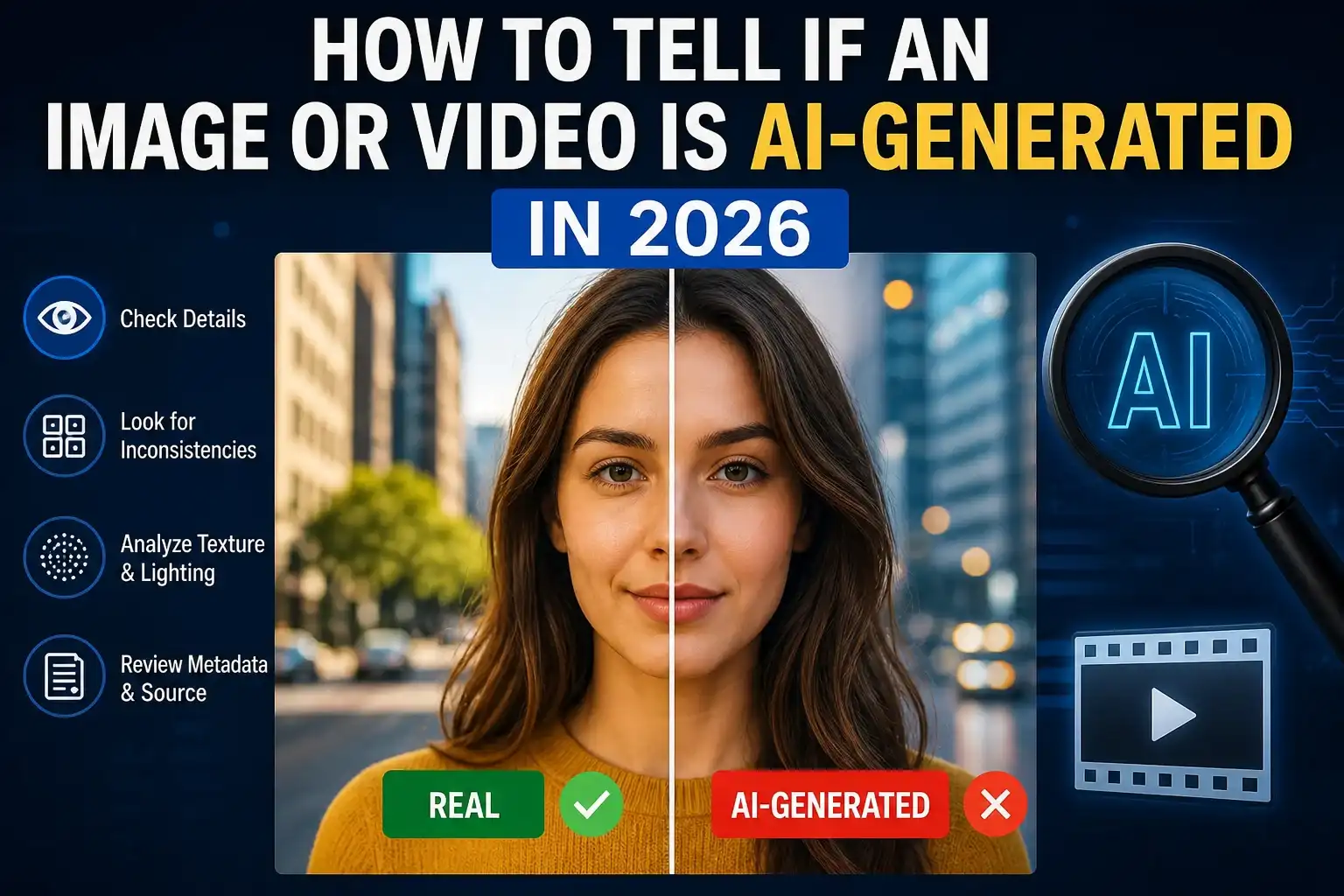

That is why digital awareness matters more than ever. Learning how to verify suspicious content is becoming just as important as learning basic online safety habits. Readers who already understand how to verify an image or video before sharing it online will recognize many of the same verification principles discussed in this guide.

This article explains how deepfake scams work in 2026, the most common scam methods, warning signs to watch for, and practical ways to protect yourself and your family online.

What Are Deepfake Scams?

Deepfake scams use artificial intelligence to create fake audio, video, or images that imitate real people. The goal is usually to:

- steal money,

- gain trust,

- spread misinformation,

- or manipulate victims emotionally.

Modern AI tools can clone:

- voices,

- facial expressions,

- speaking patterns,

- and even live video behavior.

For example, a scammer may use a short social media video to clone someone’s voice and create a fake emergency call asking for money.

This technology is evolving quickly because AI tools are becoming easier to access. Companies like OpenAI, Google DeepMind, and Microsoft continue researching AI safety and watermarking systems to reduce misuse. Initiatives such as C2PA and Content Credentials are also developing authenticity standards for digital media.

Why Deepfake Scams Are Growing in 2026

Several factors are driving the increase in deepfake scams in 2026.

AI tools are more accessible

A few years ago, creating realistic AI-generated media required advanced technical skills. Today, many tools can generate convincing content within minutes.

Social media provides training material

Scammers can collect:

- voice clips,

- photos,

- videos,

- and speaking habits

from public social media accounts.

A common mistake people make is oversharing personal content online without realizing how easily it can be reused.

People trust familiar faces and voices

Humans naturally trust recognizable people. A cloned voice that sounds like a family member can create panic before logic has time to react.

This is similar to how misinformation spreads online. Readers familiar with how to spot fake news before you share it already understand how emotional reactions can bypass careful thinking.

Common Types of Deepfake Scams in 2026

1. Voice Cloning Family Emergency Scams

This is one of the fastest-growing scams.

A victim receives a phone call from someone who sounds exactly like:

- their child,

- spouse,

- sibling,

- or friend.

The caller claims there is:

- an accident,

- kidnapping,

- legal trouble,

- or another urgent emergency.

The scammer pressures the victim to send money immediately.

In real-world situations, panic is what makes these scams effective. Many people react emotionally before verifying the story.

2. Fake CEO or Business Executive Scams

Businesses are increasingly targeted with AI-generated executive impersonations.

A scammer may:

- clone a manager’s voice,

- send fake video messages,

- or request urgent financial transfers.

Some attacks even imitate internal meetings or video calls.

This is why many companies now train employees to verify unusual requests through secondary communication channels.

3. Celebrity Investment Scams

Fake celebrity videos are frequently used to promote:

- cryptocurrency scams,

- fake investment platforms,

- online trading fraud.

The video may appear to show a celebrity endorsing a financial opportunity, but the footage is manipulated using AI.

A practical way to verify suspicious investment promotions is to compare them with trusted reporting sources and official celebrity accounts.

4. Fake Job Interview Scams

Scammers are increasingly using AI-generated recruiters and fake video interviews.

Victims may be asked to:

- provide identity documents,

- install suspicious software,

- or pay fake onboarding fees.

This is especially dangerous because remote work and virtual interviews are now common.

5. Political and Social Manipulation

Deepfake technology is also used to spread misinformation during:

- elections,

- public controversies,

- breaking news events.

Manipulated videos can be shared rapidly before fact-checkers respond.

That is why editorial verification processes matter so much. Following workflows similar to how we verify news before publishing helps reduce the spread of manipulated content.

Warning Signs of a Deepfake Scam

Deepfake content is improving, but many scams still reveal subtle clues.

Watch for:

- unusual lip-sync timing,

- robotic speech patterns,

- strange pauses,

- unnatural blinking,

- distorted facial movement,

- lighting inconsistencies,

- emotional pressure tactics.

For voice calls:

- urgent requests,

- secrecy,

- panic,

- and money demands

are major red flags.

A common mistake people make is assuming that realistic audio automatically means the person is genuine.
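The warning signs above can be treated as a simple checklist. The sketch below is only an illustration of that idea: the flag names, the weights, and the threshold are hypothetical choices made for this example, not measured values, and a low score never proves that a call or video is genuine.

```python
# Hypothetical weights for the warning signs listed above.
# The numbers are illustrative assumptions, not research findings.
RED_FLAGS = {
    "urgent_request": 2,
    "asks_for_secrecy": 2,
    "money_demand": 3,
    "caller_panicking": 1,
    "lip_sync_off": 2,
    "robotic_speech": 1,
    "strange_pauses": 1,
    "unnatural_blinking": 1,
    "lighting_inconsistent": 1,
}


def score_red_flags(observed):
    """Return (score, unknown_flags) for a collection of observed flag names."""
    observed = set(observed)
    unknown = sorted(observed - set(RED_FLAGS))
    score = sum(RED_FLAGS[flag] for flag in observed if flag in RED_FLAGS)
    return score, unknown


def advice(score, threshold=4):
    """Map a score to a plain-language next step (threshold is an assumption)."""
    if score >= threshold:
        return "Stop and verify through another channel before doing anything."
    if score > 0:
        return "Be cautious and verify before sharing money or information."
    return "No listed red flags, but realistic media alone does not prove it is genuine."


if __name__ == "__main__":
    score, unknown = score_red_flags(["urgent_request", "money_demand"])
    print(score, advice(score))
```

The point of the checklist is the habit, not the number: several pressure tactics appearing together is a strong reason to pause and verify.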

How to Verify Suspicious Videos or Audio

Pause Before Reacting

This sounds simple, but it is extremely important.

Scammers succeed when victims react emotionally instead of logically.

Before sending money or sharing sensitive information:

- slow down,

- verify independently,

- contact the person directly through another method.

Check the Original Source

Ask:

- Where did the content first appear?

- Is the account trustworthy?

- Are reputable news sources reporting the same thing?

This verification process is very similar to the methods discussed in how to verify an image or video before sharing it online.

Use Reverse Search Tools

Tools and techniques like:

- Google Lens,

- reverse image search,

- and frame extraction

can help identify whether media has been reused or manipulated.
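Frame extraction simply means saving a few still images from a video so they can be run through a reverse image search. As a minimal sketch, assuming the widely used `ffmpeg` command-line tool is installed and the video's length in seconds is already known, the helper below picks evenly spaced timestamps and builds one `ffmpeg` command per frame. The function names and output file names are hypothetical, and the commands are only built here, not executed.

```python
def frame_timestamps(duration_s, count):
    """Return `count` evenly spaced timestamps in seconds, skipping the very start and end."""
    if duration_s <= 0 or count <= 0:
        raise ValueError("duration_s and count must be positive")
    step = duration_s / (count + 1)
    return [round(step * i, 2) for i in range(1, count + 1)]


def ffmpeg_commands(video_path, duration_s, count=5):
    """Build one ffmpeg command (as an argument list) per still frame to extract."""
    commands = []
    for index, timestamp in enumerate(frame_timestamps(duration_s, count), start=1):
        commands.append([
            "ffmpeg",
            "-ss", str(timestamp),   # seek to the timestamp
            "-i", video_path,        # input video
            "-frames:v", "1",        # write a single video frame
            f"frame_{index:02d}.png",
        ])
    return commands


if __name__ == "__main__":
    for command in ffmpeg_commands("suspicious_clip.mp4", duration_s=60, count=3):
        print(" ".join(command))
```

Each saved frame can then be uploaded to Google Lens or another reverse image search to see whether the footage appeared earlier in a different context.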

Look for Provenance Signals

Authenticity systems such as C2PA and Content Credentials are designed to help track how digital content was created or edited.

These technologies are not perfect, but they are becoming increasingly important for media verification.

Practical Ways to Stay Safe From Deepfake Scams

Create Family Verification Questions

Families should establish simple private verification questions that only trusted people know.

For example:

- childhood nickname,

- shared memory,

- personal phrase.

This can quickly expose fake emergency calls.

Limit Public Voice Content

Be careful about uploading long public voice recordings online.

Some cloning systems need only a short audio sample to imitate a voice.

Verify Financial Requests Separately

Never rely only on:

- voice calls,

- video messages,

- or social media DMs

for financial decisions.

Always confirm through another trusted communication channel.
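For a household or a small business, this rule can be written down as an explicit check. The sketch below is one possible way to express it, under the assumption that every request and every confirmation is labeled with the channel it arrived on; the class, function, and channel names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Confirmation:
    channel: str     # e.g. "phone_callback", "in_person" (hypothetical labels)
    confirmed: bool  # True if the person confirmed the request on that channel


def is_request_verified(request_channel, confirmations):
    """Return True only if someone confirmed the request on a different channel.

    A confirmation on the same channel the request arrived on does not count,
    because that channel may be the one the scammer controls.
    """
    return any(
        c.confirmed and c.channel != request_channel
        for c in confirmations
    )


if __name__ == "__main__":
    checks = [Confirmation("video_call", True)]
    print(is_request_verified("video_call", checks))  # same channel only

    checks.append(Confirmation("phone_callback", True))
    print(is_request_verified("video_call", checks))  # confirmed elsewhere
```

The design choice worth noting is that the default answer is "not verified": money moves only after a positive confirmation arrives over a separate, trusted channel, such as calling back a number you already had saved.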

Educate Older Family Members

Scammers frequently target older adults, using fear and urgency to push them into acting quickly.

Simple education about AI scams can significantly reduce risk.

Stay Updated About AI Threats

AI technology evolves rapidly. Following trusted cybersecurity and digital safety resources helps people recognize new scam tactics earlier.

Readers interested in digital safety trends may also find how AI is changing online misinformation useful.

Why Human Verification Still Matters

Technology can help detect manipulated media, but human judgment remains critical.

A realistic-looking video does not automatically mean it is real.

The most effective defense combines:

- skepticism,

- source verification,

- emotional awareness,

- and practical digital safety habits.

Google's quality guidance likewise emphasizes helpful, trustworthy, people-first content. Educational articles that help readers understand online risks provide long-term value rather than temporary viral traffic.

Real-World Scenario Example

Imagine receiving a late-night phone call from someone who sounds exactly like your brother.

The caller says:

- he was in a car accident,

- his phone was taken,

- and he urgently needs money.

The voice sounds real.

The panic feels real.

But instead of reacting immediately, you:

- pause,

- hang up,

- call your brother directly,

- verify through another family member.

That simple verification step prevents the scam from succeeding.

In real-world situations, small pauses often make the biggest difference.

Frequently Asked Questions

What is a deepfake scam?

A deepfake scam uses AI-generated audio, video, or images to impersonate real people for fraud, manipulation, or misinformation.

Are deepfake scams common in 2026?

Yes. AI tools have become easier to access, making deepfake scams more common and more convincing.

How can I tell if a video is AI-generated?

Look for:

- lip-sync issues,

- unnatural movement,

- strange facial behavior,

- inconsistent lighting,

- and suspicious source accounts.

Can AI clone someone’s voice?

Yes. Modern AI systems can clone voices using surprisingly small audio samples.

What should I do if I receive a suspicious emergency call?

Pause and verify through another communication method before taking action.

Are businesses also targeted?

Yes. Many scams now target companies through fake executive calls and AI-generated business impersonations.

Conclusion

Deepfake scams in 2026 are becoming more sophisticated, but awareness and verification remain powerful defenses. Scammers rely heavily on emotional reactions, urgency, and trust in familiar voices or faces.

The safest approach is simple:

- slow down,

- verify independently,

- and never trust digital media blindly.

As AI-generated content continues evolving, digital awareness will become one of the most important online safety skills for everyday users, businesses, and families alike.

Shiva S writes about AI, cybersecurity, online safety, Google Discover, and digital trends. His focus is creating practical, easy-to-understand guides that help readers stay informed and safer online.